Sophia Bano

UCL, United Kingdom

Robot vision and scene understanding for minimally invasive surgery.

An ECCV workshop on the data bottleneck for robust medical imaging AI.

DKFZ / Heidelberg University, Germany

Surgical data science, benchmarking, and reproducible evaluation.

Universidad de Zaragoza, Spain

Visual SLAM, deformable SLAM for endoscopy, EndoMapper.

CUHK, Hong Kong

Medical AI across MICCAI, IPCAI, and ICRA.

Primary contact | Intuitive Surgical

iMED dataset lead | UCL Hawkes Institute

CLiMB2026 lead | Universidad de Zaragoza

SurgVU Challenge organizer | Intuitive Surgical

Vision system analyst | Intuitive Surgical

Computer Vision & Medical Imaging engineer | Intuitive Surgical

Research Scientist | Intuitive Surgical

Machine learning engineer | Intuitive Surgical

Featured advisor

NCT Dresden professor working on surgical data science, computer-assisted surgery, robotic vision, and AI-enabled clinical translation.

Invited speaker

UCL researcher focused on robot vision and scene understanding for minimally invasive surgery.

Invited speaker

DKFZ and Heidelberg University researcher in surgical data science, benchmarking, and reproducible evaluation.

Invited speaker

Universidad de Zaragoza researcher known for visual SLAM, deformable SLAM for endoscopy, and EndoMapper.

Invited speaker

Chinese University of Hong Kong researcher working on medical AI across clinical and surgical applications.

Advisor

UCL Professor of Robot Vision, Co-Director of the UCL Hawkes Institute, and Royal Academy of Engineering Chair in Emerging Technologies.

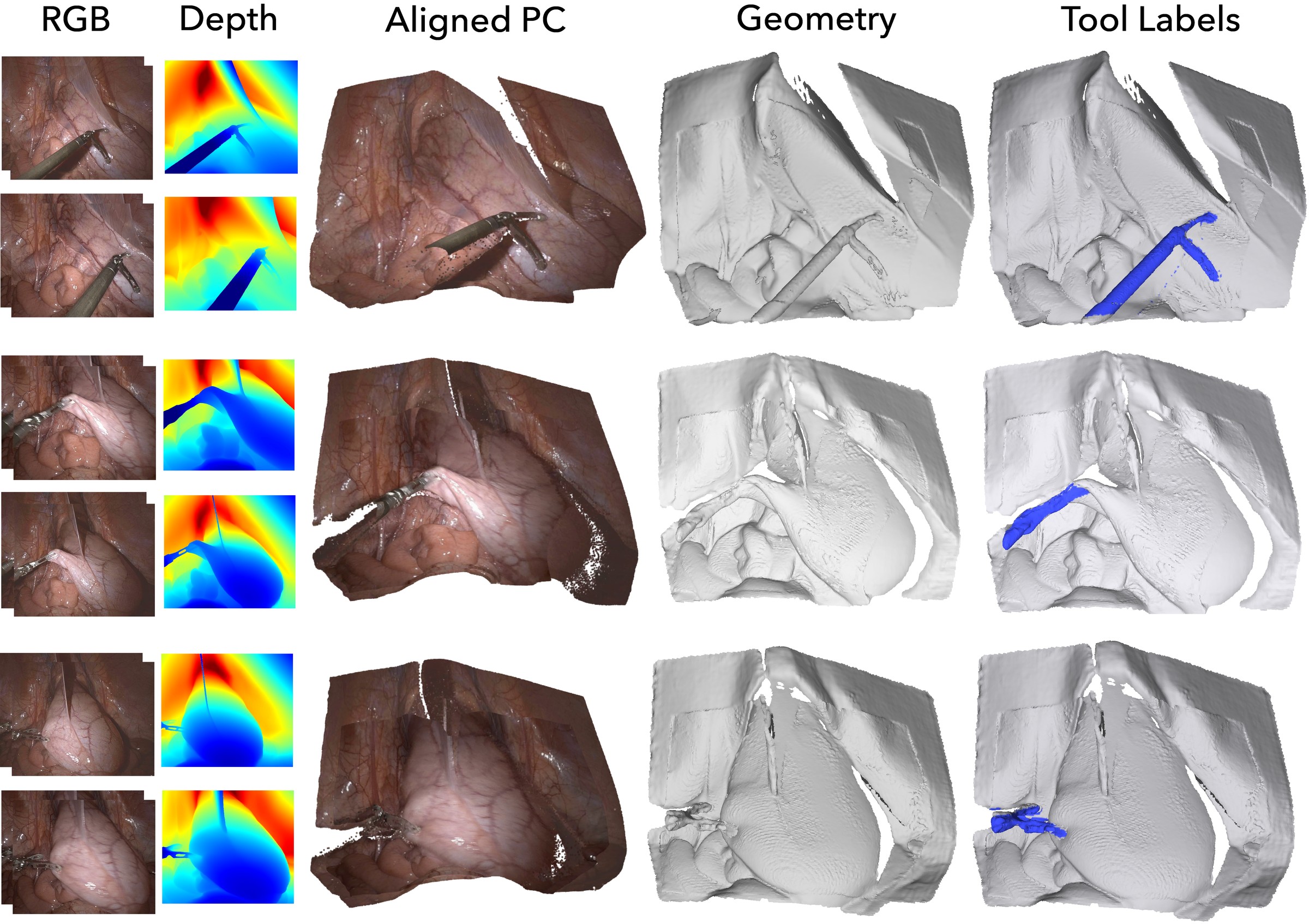

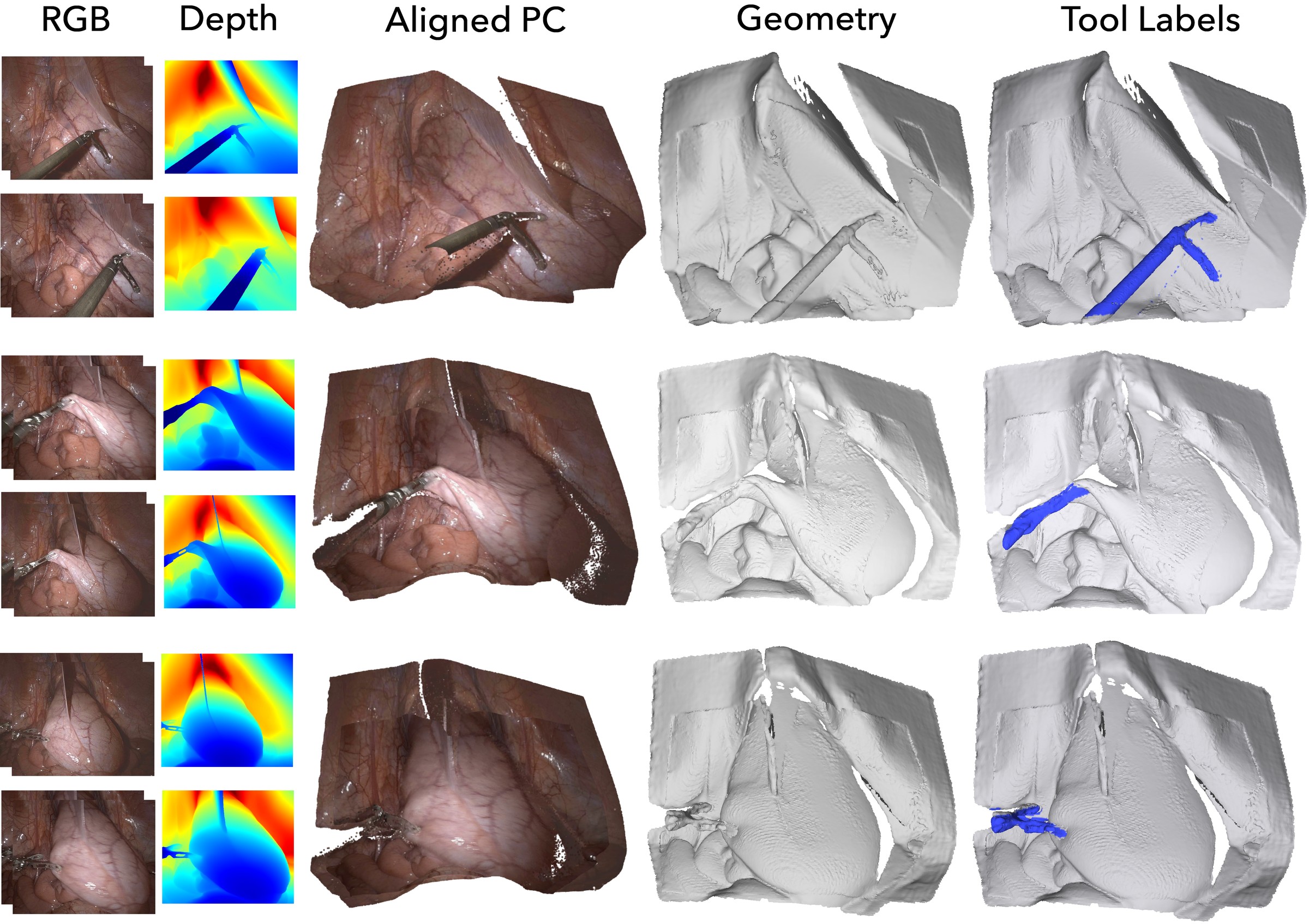

Multi-Endoscope Dataset for 3D Perception

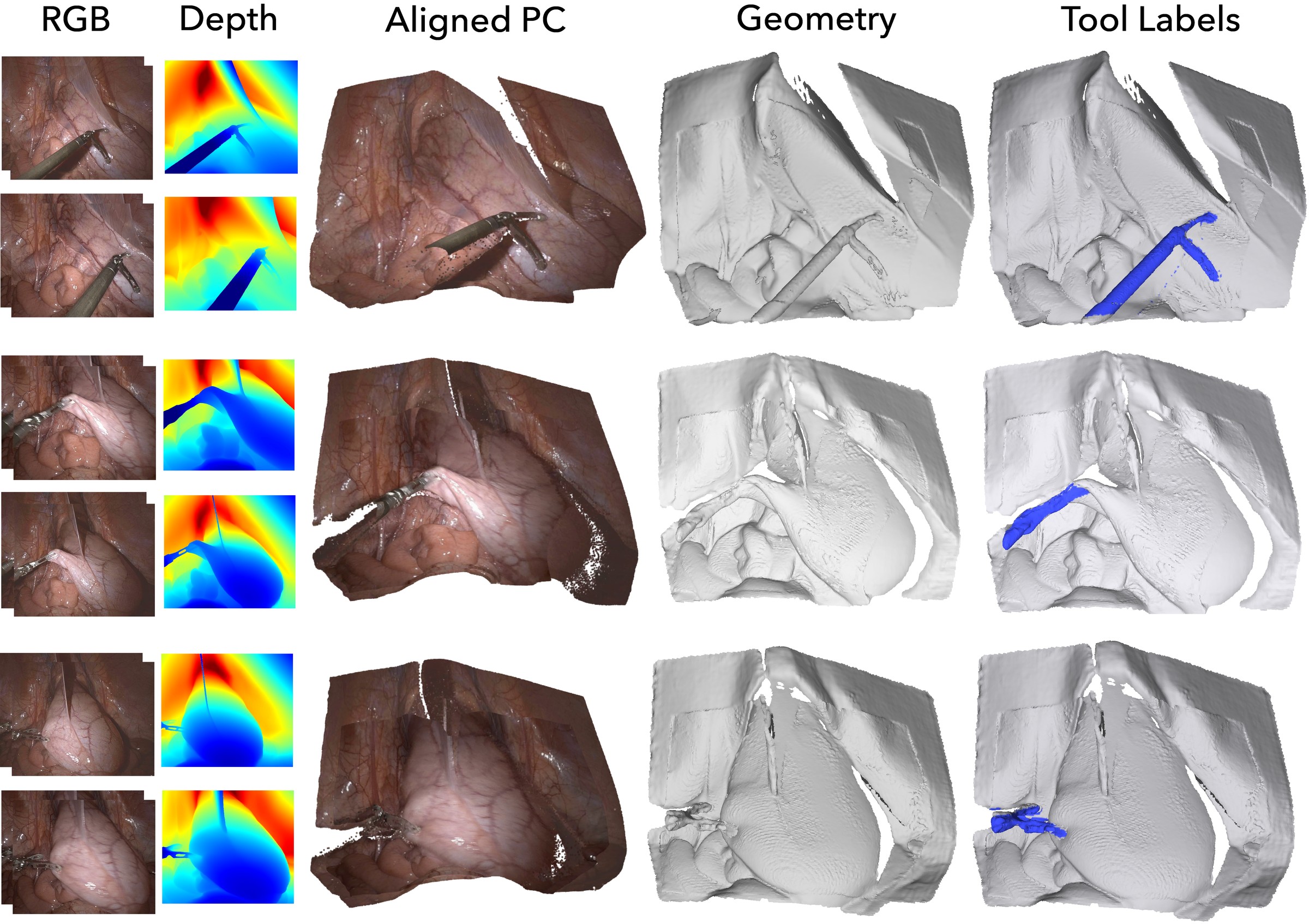

iMED2026 is a MICCAI/EndoVis challenge on synchronized multi-endoscope data, with relative pose estimation and deformable novel view synthesis tracks for endoscopic 3D perception.

Challenge site

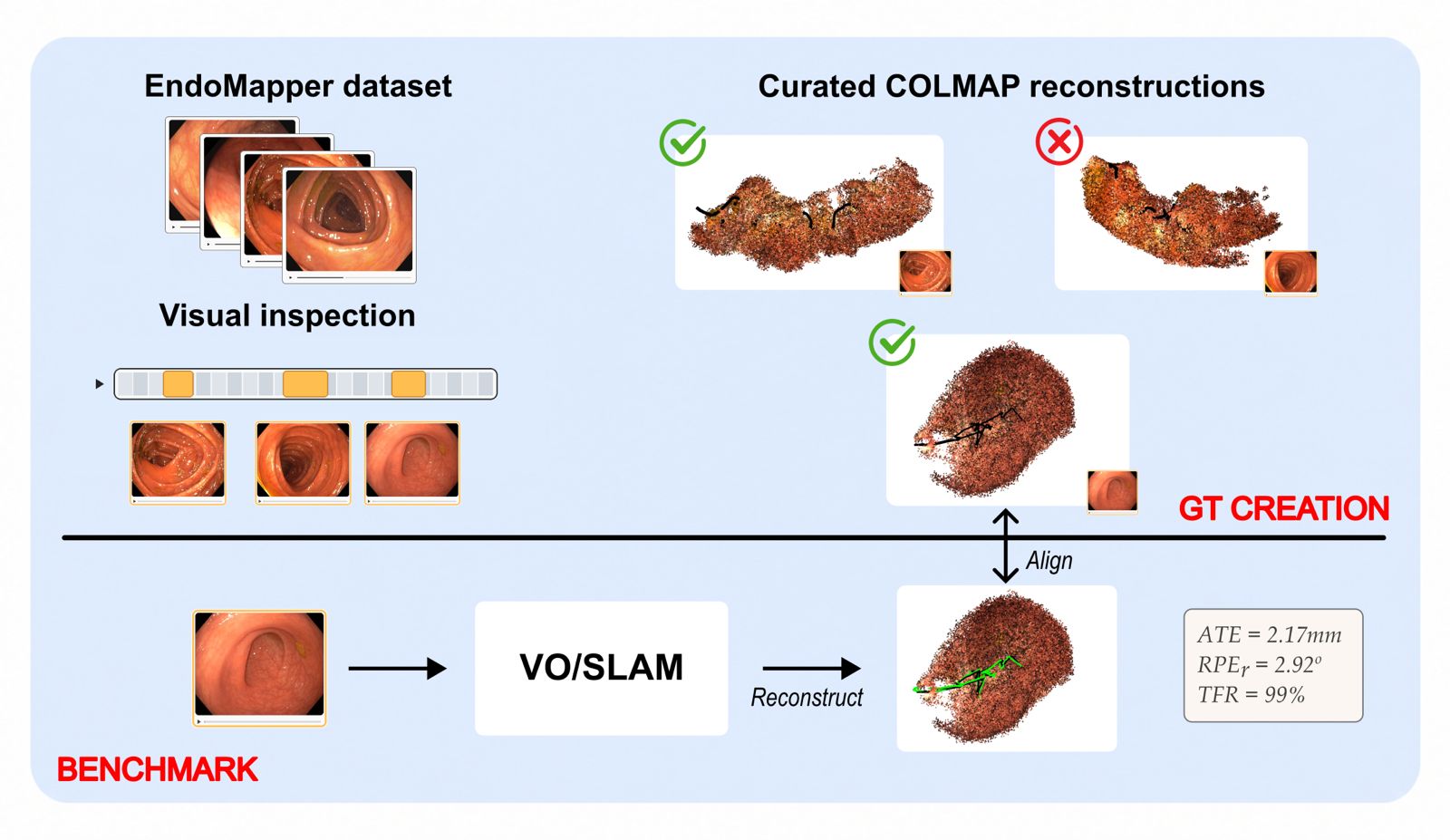

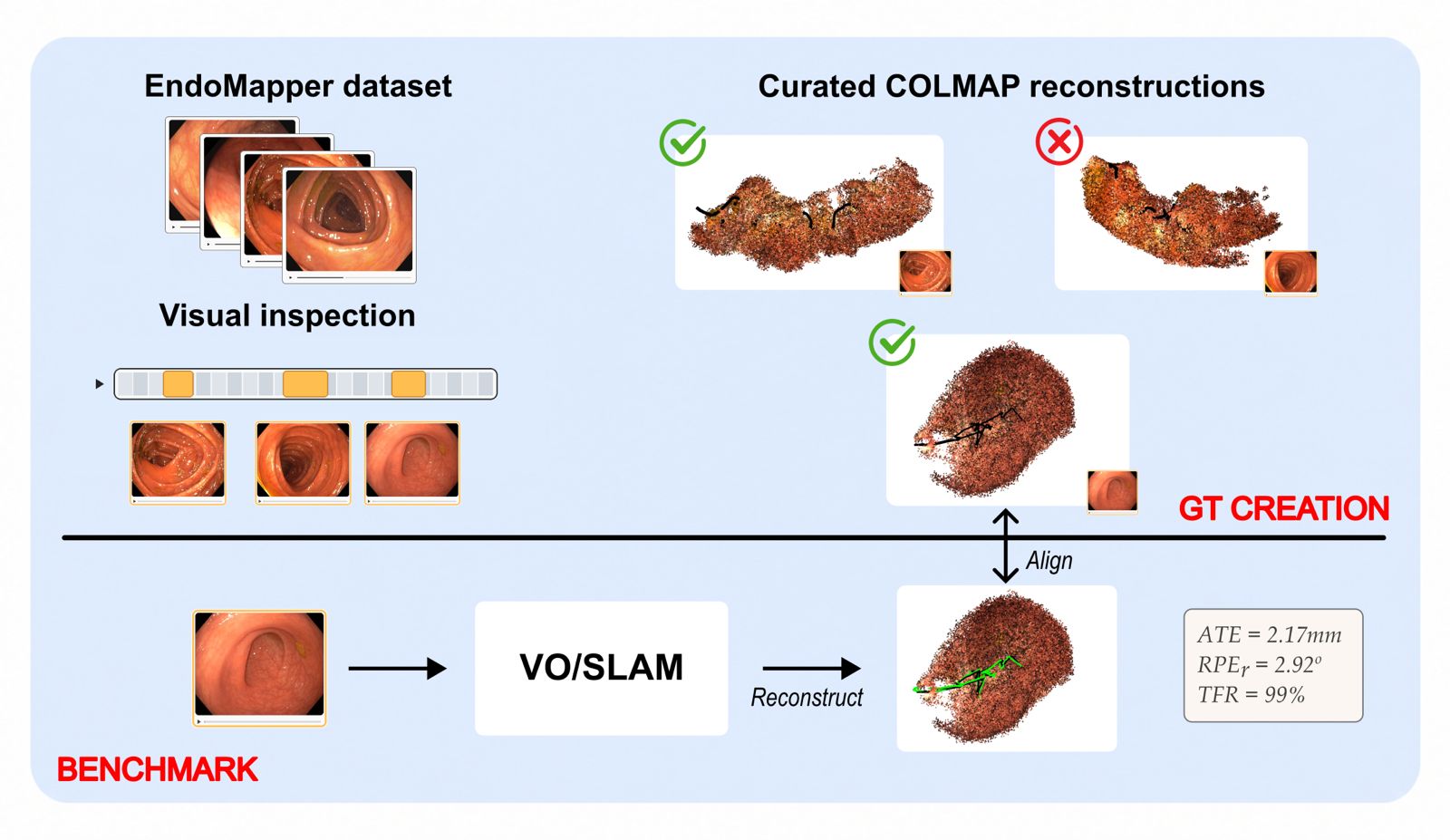

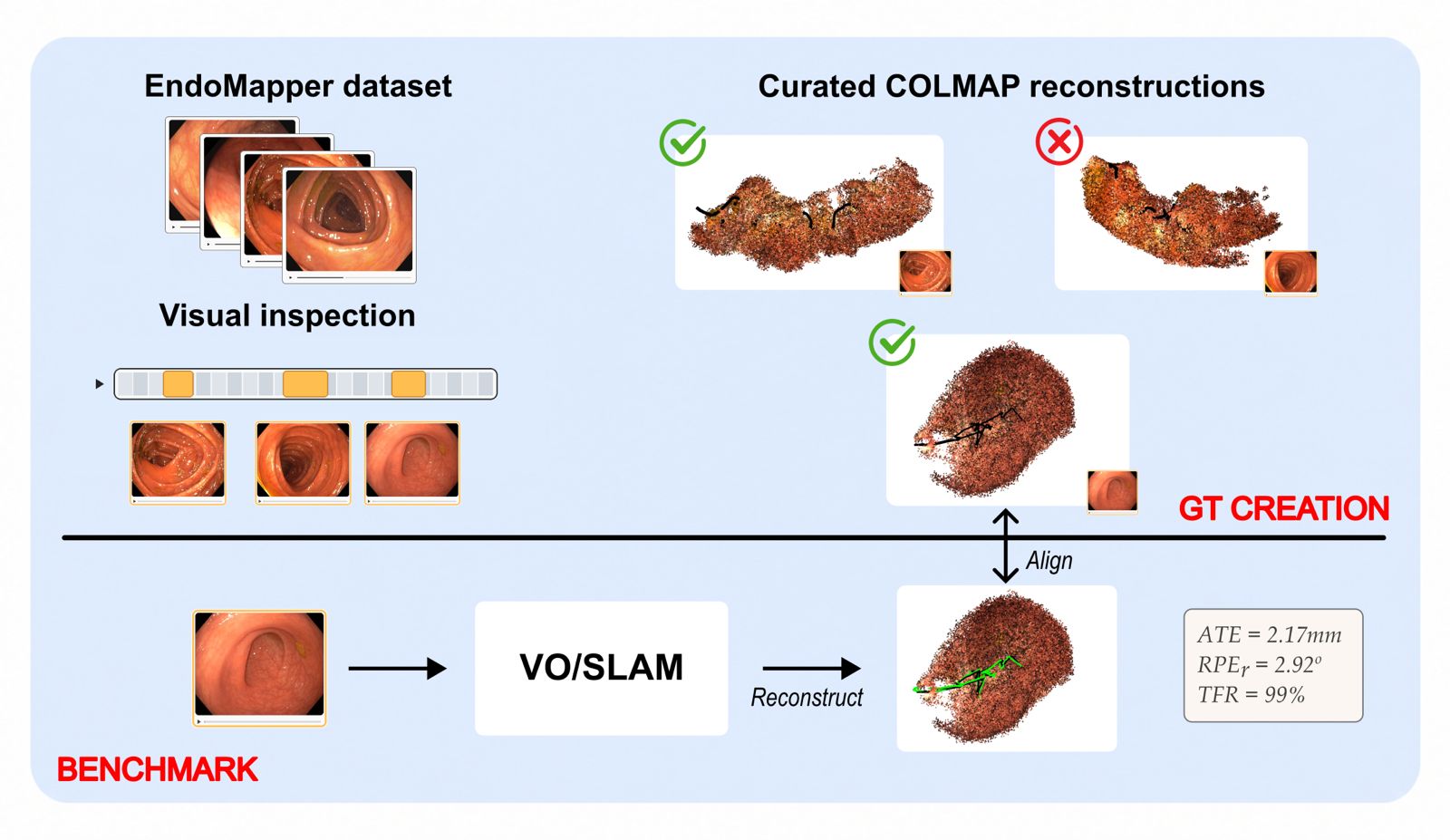

Colonoscopy Localization and Mapping Benchmark

CLiMB2026 is an unpublished benchmark under development for colonoscopy localization and mapping. Public dataset access, paper details, and benchmark statistics will be added after organizer review.

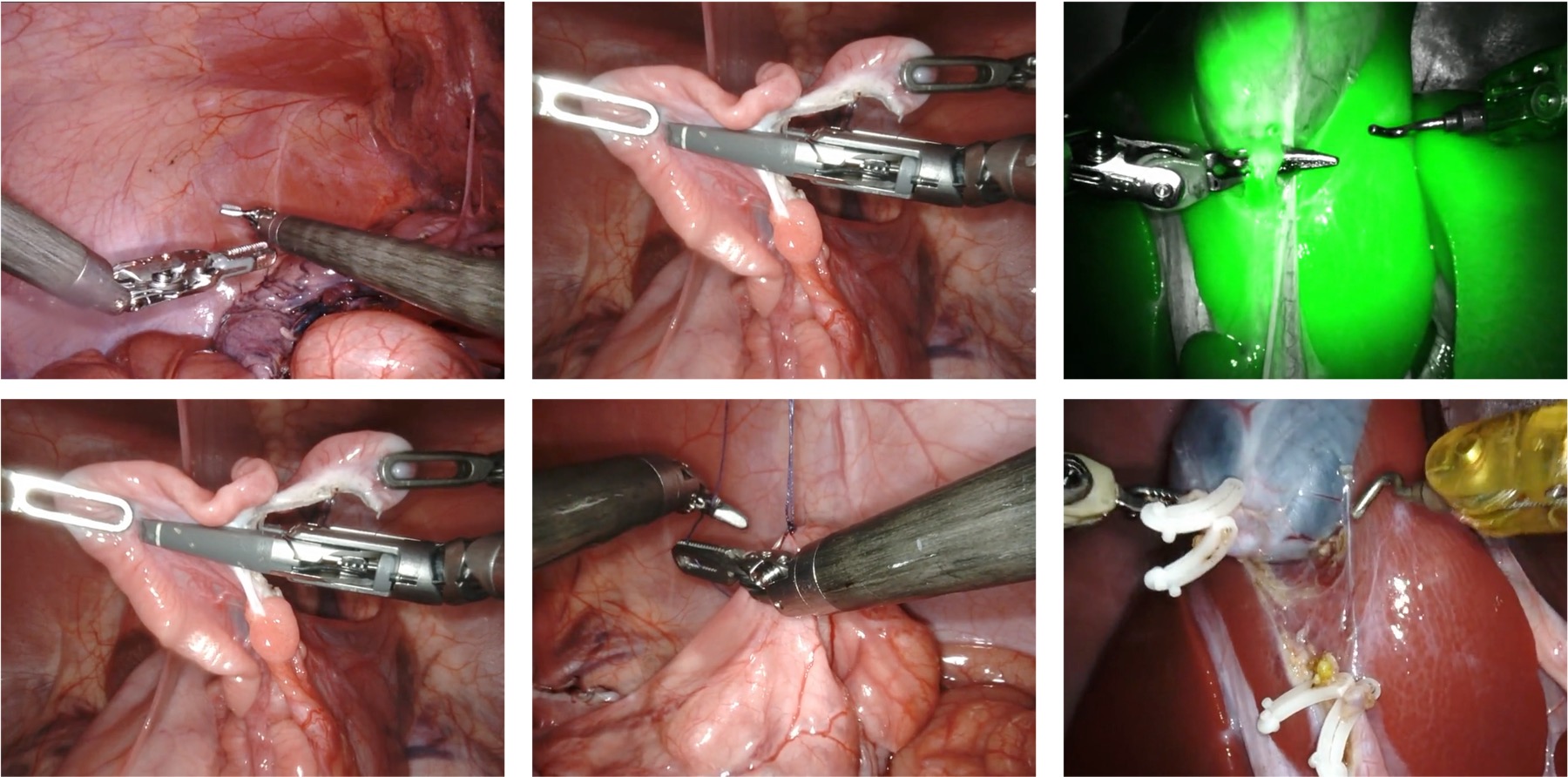

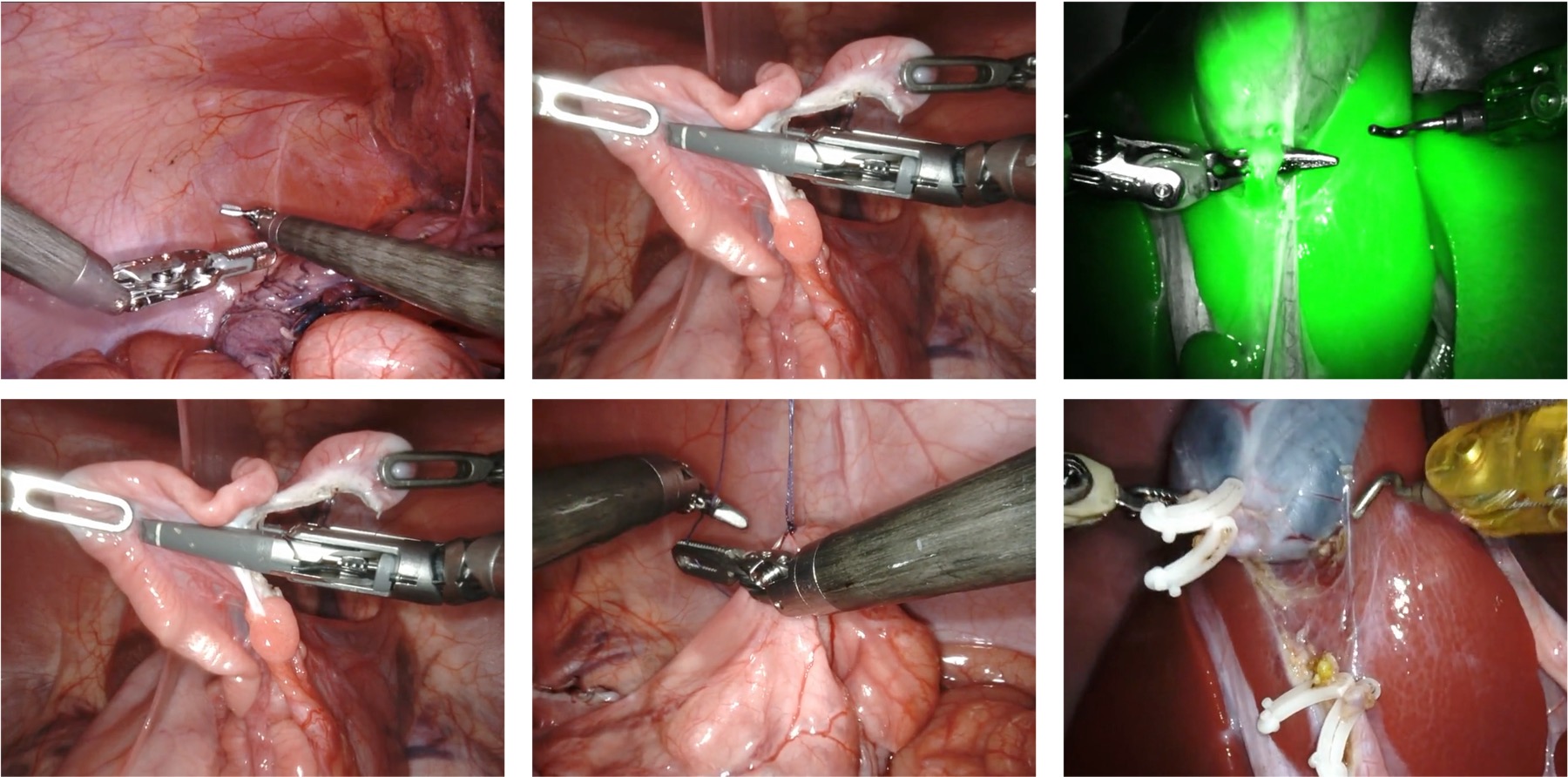

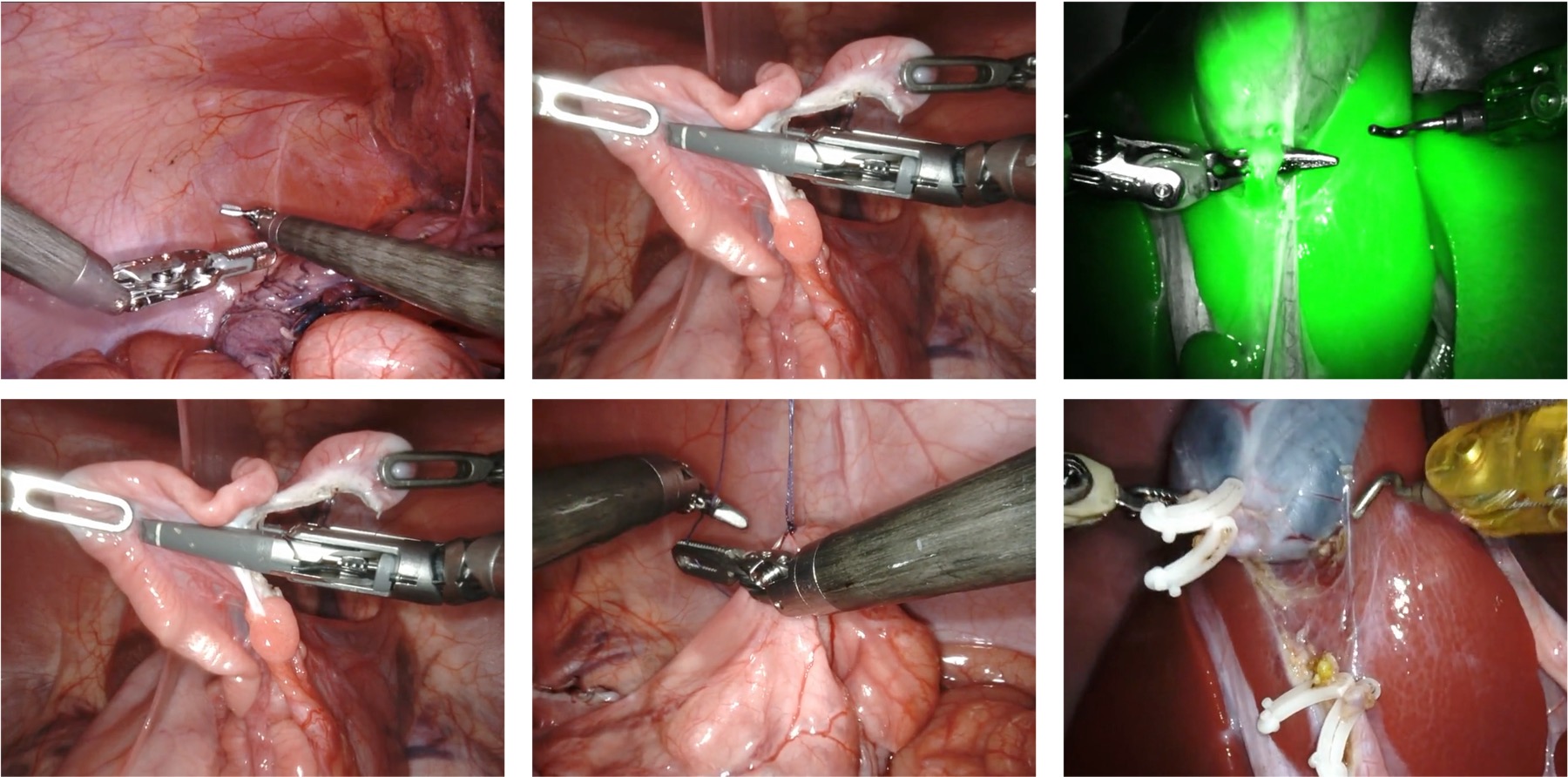

Surgical Visual Understanding Dataset Series

SurgVU focuses on large-scale surgical video understanding. DCA-MI highlights it as a dataset case study for real-world video curation and evaluation.

arXiv:2501.09209

New work prepared for DCA-MI in ECCV workshop format, reviewed through a double-blind OpenReview workflow by the program committee. Full papers, extended abstracts, and dataset or benchmark papers are welcome.

Relevant papers already peer-reviewed at major computer vision, machine learning, medical imaging, robotics, or clinical AI venues and journals. These submissions are screened for topical fit and poster availability rather than re-reviewed.

Machine Learning Engineer at Intuitive Surgical and primary organizer of the SurgVU Challenge at MICCAI 2026. He is also the primary author of SurgiSR4K, the first 4K surgical imaging and video dataset. He holds graduate degrees in Robotics and Computer Science from Johns Hopkins University and has extensive experience in computer vision, video analysis, and vision-language models for medical applications.

Vision system analyst at Intuitive Surgical. Her research interests are in computational imaging, specifically the joint design of optics and image processing. She has published in venues such as PNAS, Nature Communications, and CVPR.

Computer Vision & Medical Imaging engineer at Intuitive Surgical, specializing in advanced imaging and robotic-assisted procedures. CMU Robotics Institute alumnus; presenter and reviewer across IEEE TRO, IROS, CVPR, and ICML.

shuoqi.chen@intusurg.com

Research Scientist | Intuitive Surgical

Machine Learning Engineer at Intuitive Surgical, working at the crossroads of surgical data science and AI. His research focuses on real-time surgical guidance and surgeon skill assessment for eye surgery and robot-assisted procedures using multiple data modalities, including image, kinematics, and visual attention, through computer vision and deep learning. His interests also include the investigation of biased datasets in the surgical field.

Featured advisor

Professor for Translational Surgical Oncology at NCT Dresden, working on surgical data science, computer-assisted surgery, robotic vision, and AI-enabled clinical translation.

Robot vision

UCL researcher focused on robot vision and scene understanding for minimally invasive surgery.

Surgical data science

DKFZ and Heidelberg University researcher in surgical data science, benchmarking, and reproducible evaluation in medical AI.

Visual SLAM

Universidad de Zaragoza researcher known for visual SLAM, ORB-SLAM, deformable SLAM for endoscopy, and the EndoMapper dataset.

Medical AI

Chinese University of Hong Kong researcher working on medical AI across clinical and surgical applications, with recent work across MICCAI, IPCAI, and ICRA.

Robot vision

UCL Professor of Robot Vision, Co-Director of the UCL Hawkes Institute, and Royal Academy of Engineering Chair in Emerging Technologies, focused on surgical robotics and AI for minimally invasive interventions.
